How does a robot learn to touch?

Interview with Katherine J. Kuchenbecker on haptic intelligence

This interview was edited for language and shortened for the written version. You can listen to the longer version in the Cyber Valley Podcast Direktdurchwahl, episode 6: Feel the robot. The interview is in English.

I would like you to concentrate on your hands. What do you feel right now?

Actually, my hands are a little bit cold because it's winter. But beyond that, the sensations in my hands are most fascinating to me when I bring my hands into contact with objects in the world. Our hands are exquisitely sensitive to all kinds of different mechanical information and motions. Through our hands we accomplish just about everything we do in the world, whether it's picking up a glass of water, typing on a keyboard, driving a car, or hugging a loved one. All that physical interaction with the world passes through our sense of touch.

When talking about the sense of touch, about “haptics”, what exactly do we talk about?

I usually divide haptics into two main categories. The first category is about what you feel with your skin. Skin has little cells, mechanoreceptors, pain receptors and more that measure and detect what's happening, for example if the air is cooling or something is pressing, hurting, vibrating, or shifting back and forth. All those sensations are flowing through your peripheral nervous system up to your brain. And in the same way that all the little parts of an image are put together, your brain is making sense of this constant stream of different tactile sensations.

The other half of haptics is more about motion: Where is your body in space? If you curl your hand around, say a spoon, you feel its smoothness, coldness and edges against your skin. But how are your fingers shaped around it? Knowing the pose of your hand helps you interpret what your skin feels. These two categories of touch work together to both give you control over your body to accomplish what you want and help you understand the things that you're touching and doing in the world.

If we build a robot with today’s technology, would its sense of touch be as good as that of a human?

Definitely not. There are huge limitations. Most robots, whether it's a robot in a factory or a robot helping in a hospital, have almost no sense of touch. They have cameras and can see the world around them, and maybe they have other sensors, a network connection, access to data. Often the robot knows about its own body pose, but it almost never has skin.

That's because it's very expensive and difficult to fabricate artificial skin to make it both sensitive and robust. When things touch each other, they tend to get damaged, especially if they have little wires. And you do not only measure if something is touching you and where, but the vibrations, the transients, and the changes, like texture sliding over your skin. All of that happens really fast. So, we need a lot of measurements every second; it is not easy to fit that in the skin of a robot!

But if the human skin is already better than anything we can build right now, why should we build it?

Humans can do many jobs, but some jobs are dangerous, for example a disaster rescue, with radiation, or tasks deep under the ocean. And perhaps a human can do a task once, but if you're going to do it thousands of times, this might be harmful and not the most stimulating or interesting job for humans. My father is a surgeon, and over his entire lifetime, his wrists and hands have started hurting because he's done repetitive motions. Robots tend to be good at that. So where could we use a robot to help? And can we automate pieces of the workflow? But still, in my systems, a human is usually in control of what's happening.

When you're at a party, how do you briefly explain what you are doing to someone who is not a scientist?

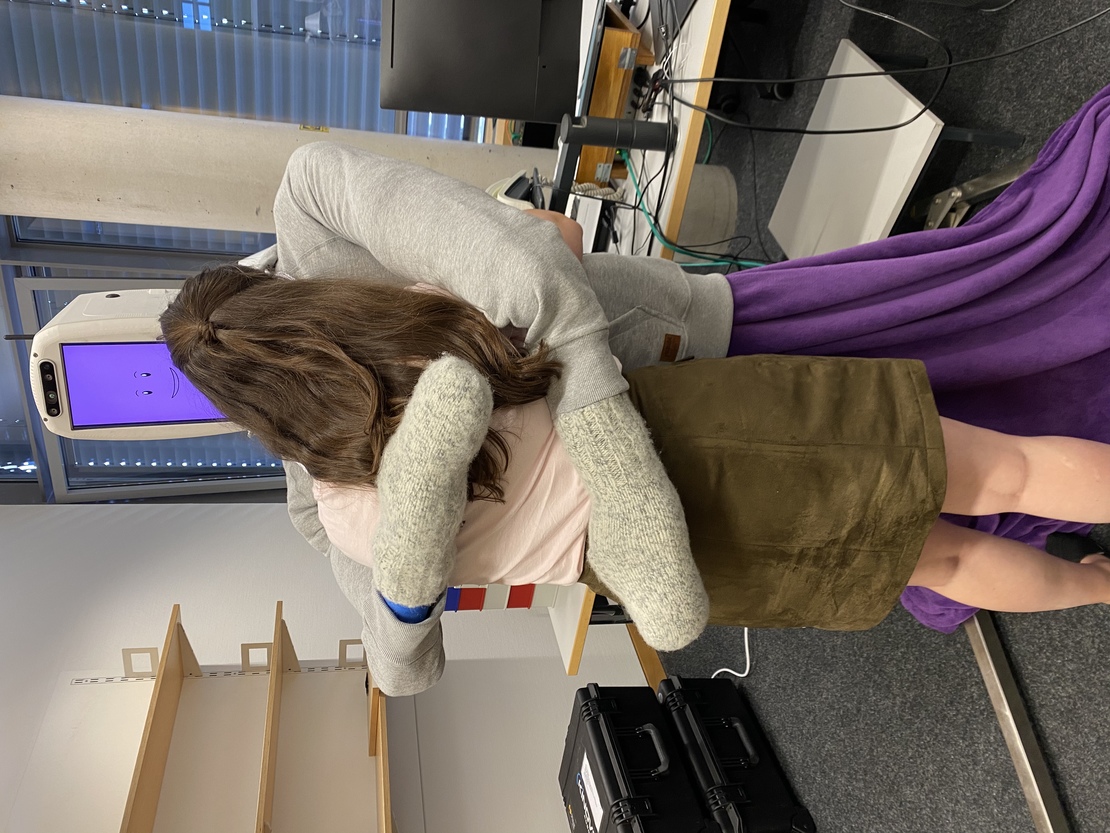

Sometimes I give an example. Some people have seen in the press some of the projects we've done. For example, HuggieBot, a robot that can give good hugs to humans.

Photo of HuggieBot; Max Planck Institute for Intelligent Systems

Why should it do that?

Creating an artificial system that simulates or replicates this interaction is highly beneficial. Hugs calm you down. They lower your stress, decrease your cortisol levels, and increase your oxytocin. During the pandemic we had to physically distance from our loved ones, but physical contact is a natural human need. By creating a hugging robot and adjusting how it behaves, we can test which factors are truly important. We varied how it physically reacted: We programmed it to let go as soon as you let go, so it respects your wishes. We also programmed the robot to proactively squeeze you gently. It was amazing to see how positively people responded then; they felt like the robot really cared about them.

So, do we want robots to touch us in as human-like a way as possible, or do we want a new way of interacting with machines, so that we are not so emotionally connected to something without feelings or emotions?

Most of us have never hugged a robot before. This is the first robot of its kind, and I think you really have to try it to understand what it's like. In our studies, people actually hug much longer, like 20 seconds, whereas a typical hug with a friend is only two or three seconds long. We compared that to hugging a human. Many people mentioned feeling guilty about the actress we had hired to hug them. They said that she might feel uncomfortable at how long they would want to hug. With a robot, you don't have that. You don't have to worry about how the robot feels.

Haptic interaction is one way to interact with a robot. But you can also use speech, for example. Isn't it easier to just make the robot react to what you're saying it should do?

It seems counterintuitive to humans because we're all so good at tasks like chopping up a carrot. But it is actually much easier for a robot to hear you say “robot, please chop the carrot” versus actually physically chopping the carrot. First, you have a dangerous thing, a knife. And a carrot is a natural material that is different every time. We have not yet mastered haptic intelligence nearly to the level of understanding speech. A robot can also look at the world and better understand what it's seeing compared to touching things or manipulating objects in the world.

When I looked at your department’s research areas at the Max Planck Institute for Intelligent Systems and saw that one area was “understanding tactile contact”, I was actually a little bit surprised. I thought we already understand what touch is.

Yes, we take our sense of touch for granted. Just think about how clumsy you become if your hand goes to sleep or if the dentist applies an anesthetic locally. You almost cannot talk or drink because you don't have this feedback of how your body is moving, which is tightly connected to how you activate your muscles. I guess touch is an under-appreciated sense because we're all so good at it through practice. Babies and children gain this experience through direct practice, and it takes years. I mean, I would not trust my four-year-old niece to chop a carrot.

How would you describe your department “Haptic Intelligence”, at the intersection of robotics and machine learning?

That's close. We use machine learning in many aspects of our work, but I might say we're at the intersection of robotics and human-computer interaction. We're also trying to do curiosity-driven fundamental research to give some insights about how the human sense of touch works.

So where does the artificial intelligence come in?

AI and machine learning are used for interpreting the signals. Let’s say I have a sensor on a robot, a fingertip sensor. There's some transformation which is often nonlinear and complicated. That's where the pattern recognition capabilities of machine learning really help. I chose the name of my department to be “Haptic Intelligence” because I believe that to create intelligent robot systems, we need not only the software side, but also improvements on the physical side.

If someone wants to work in the field of tactile intelligence, what should they study?

You should always follow your heart. Try a few things early in your career. If you know someone who's a computer scientist, ask them what they do. I think I could have been very happy as a computer scientist or as an electrical or a biomedical engineer. All of my degrees are in mechanical engineering, but I took a lot of classes in these other disciplines. A professor at Stanford used to tell us that we should try to be shaped like the letter “T”: broad, but then specialized in one area. You need to be able to speak to the other experts in relevant areas, so you can really build complex systems that are not only mechanical, but also involve electronics and a computer program. To me, it's very meaningful to create these integrated systems. It's complicated, it's tricky, but it's also fascinating.

Illustration of a robot arm. Graphic: Cyber Valley / Beiter
